A new study from a team of researchers, including IBM, has found a way to possibly overcome a key challenge in quantum computing. (Image credit: Flavio Coelho via Getty Images)

Researchers have achieved a new record for qubit fidelity in superconducting quantum computer systems, overcoming a key barrier in quantum computing.

In a study published Feb. 27 in the journal Nature Communications, scientists from IBM, RWTH Aachen University in Germany and Los Angeles-based startup Quantum Elements addressed quantum error correction and suppression, which is the largest hurdle to building machines more powerful than the fastest supercomputers.

Superconducting quantum computers use quantum bits (qubits), the quantum equivalent of a computer bit, to perform computations. The systems the researchers used, IBM's 127-qubit Kyiv and Marrakesh processors, employ a combination of "physical qubits" and "logical qubits." A logical qubit is a group of entangled physical qubits that stores the same information in different places, in case a physical qubit storing that information fails mid-calculation.

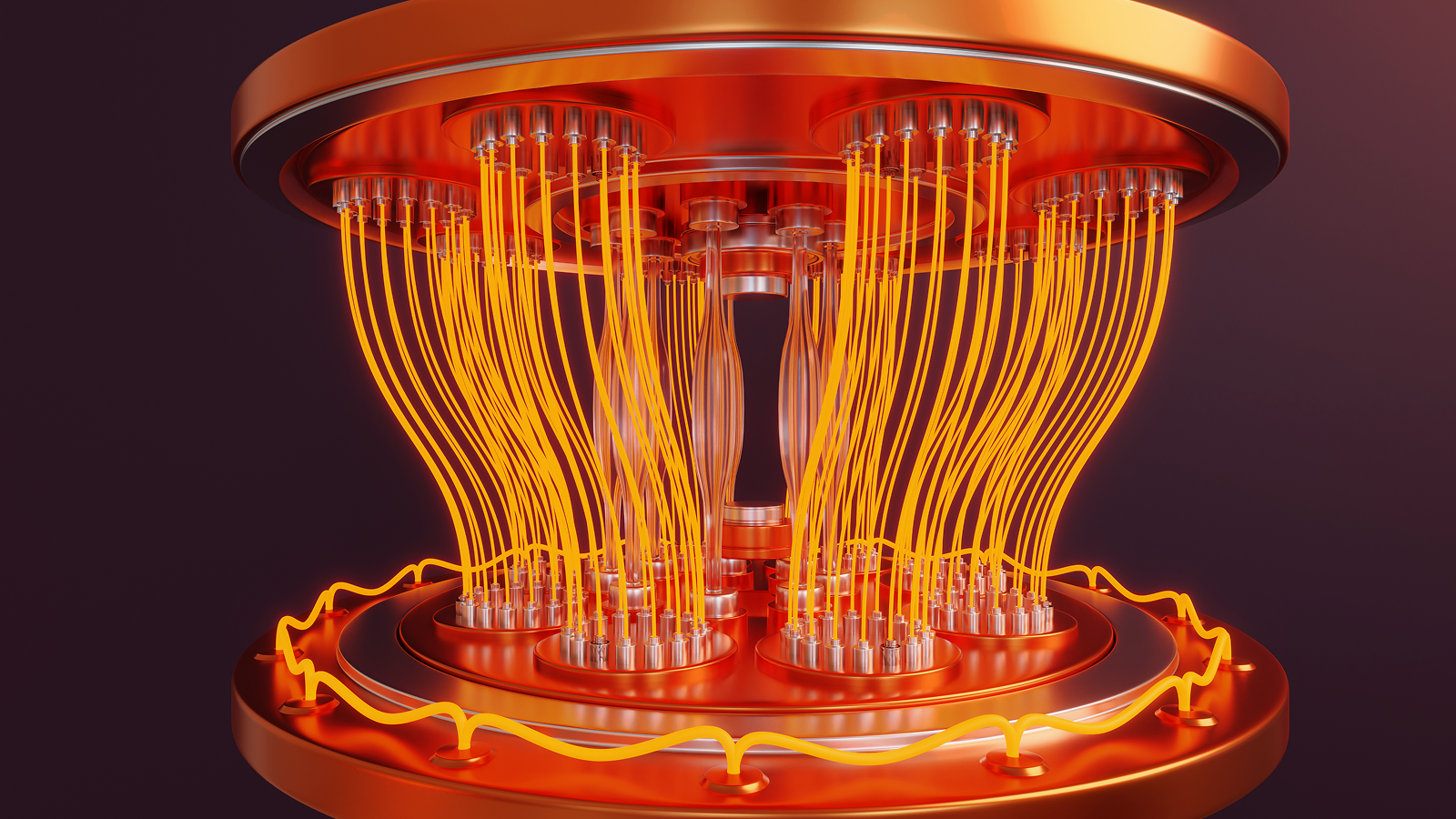

Physical qubits are embedded in a quantum computer's hardware layer as a complex, geometrically precise circuit made of superconducting metal. When cooled to near absolute zero, these metals lose all electrical resistance, allowing quantum information to flow without losing energy.

But these qubits are susceptible to the slightest perturbation, including vibration, local background noise and other environmental factors, making them brittle by nature. To compensate for this fragility, scientists group multiple physical qubits together to form a logical qubit.

When computations are performed across logical qubits, the physical qubits act as parity bits that eliminate errors. But the inherent problem with this setup, the scientists said in the new study, is that it's weak against "logical errors."

Logical errors occur when multiple physical qubits within a logical qubit succumb to noise. Essentially, when one physical qubit fails, the others act as a fail-safe against its erroneous signal. But when multiple qubits fail, the system treats the error they produce as the proper signal — and the calculation is ruined.
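The failure mode described above can be illustrated with a classical analogy. The sketch below is not the quantum code used in the study; it assumes a simple three-bit repetition scheme decoded by majority vote, and the helper names `majority_vote` and `flip` are made up for illustration.

```python
from collections import Counter

def majority_vote(bits):
    """Return the most common value among an odd number of redundant bits."""
    return Counter(bits).most_common(1)[0][0]

def flip(bits, positions):
    """Return a copy of bits with the given positions flipped (0 <-> 1)."""
    return [b ^ 1 if i in positions else b for i, b in enumerate(bits)]

# Classical stand-in for a logical qubit: the value 0 stored in three places.
encoded = [0, 0, 0]

one_error = flip(encoded, {1})        # a single bit fails
two_errors = flip(encoded, {0, 2})    # two bits fail at once

decoded_one = majority_vote(one_error)    # the lone error is outvoted
decoded_two = majority_vote(two_errors)   # the errors outvote the true value

print(decoded_one, decoded_two)
```

With one failure the vote still recovers the stored 0. With two failures the vote confidently returns 1, which is the classical counterpart of an undetected logical error.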

Suppressing errors before they happen

The 127-qubit IBM systems the researchers used are prone to a specific type of noise called "ZZ crosstalk," which is generated by the particular arrangement of their physical qubits.

The Quantum Elements team developed a hybrid approach to dealing with this specific type of noise. It involves suppressing crosstalk errors before they happen, thus reducing the overall number of undetectable logical errors that can occur. They coupled this technique with existing error-correction tools to create a novel hybrid protocol.

As a result, the researchers achieved the highest-fidelity quantum calculations — those with the lowest amount of noise — on superconducting qubits for the longest period of time on record.

According to the study, scientists had previously achieved a peak encoding fidelity of 79.5% in one attempt and 93.7% in another, which subsequently declined to approximately 30% after roughly 27 microseconds.

The peak-fidelity metric indicates the highest accuracy achieved within the quantum system, which occurs directly after the logical qubit's formation. The longer a quantum computer can hold peak or near-peak fidelity, the more capable it is at running quantum algorithms.

The team shattered those previous records using a new technique called normalizer dynamical decoupling (NDD). They achieved 98.05% peak encoding fidelity, and fidelity was still at 84.87% after 55 microseconds.

The refrigerated part of a quantum computer, where qubits are kept at near absolute zero temperatures. (Image credit: Dragon Claws/Getty Images)

Conventional dynamical decoupling, a standard error-suppression technique, involves using microwave pulses to force physical qubits to flip back and forth. This regulates the qubits and generally averages out background noise, but it does so one physical qubit at a time.
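The flip-and-average idea behind conventional dynamical decoupling can be sketched for a single idealized qubit. This is a toy model of a basic two-pulse echo, not the NDD protocol from the study. It assumes a constant unwanted frequency offset and perfect, instantaneous pulses, and the 50 kHz offset and 10 microsecond idle time are hypothetical values chosen for illustration.

```python
import cmath
import math

def drift(state, detuning, duration):
    """Free evolution under an unwanted Z-type frequency offset (rad/s)."""
    a, b = state
    phase = detuning * duration / 2
    return (a * cmath.exp(-1j * phase), b * cmath.exp(1j * phase))

def flip(state):
    """Ideal, instantaneous X pulse: swap the two amplitudes."""
    a, b = state
    return (b, a)

def fidelity(state, target):
    """Squared overlap between two single-qubit states."""
    overlap = sum(t.conjugate() * s for t, s in zip(target, state))
    return abs(overlap) ** 2

plus = (1 / math.sqrt(2), 1 / math.sqrt(2))  # equal superposition of 0 and 1
detuning = 2 * math.pi * 50e3                # hypothetical 50 kHz offset
idle_time = 10e-6                            # hypothetical 10 microsecond idle

# Without pulses, the offset steadily rotates the state away from its start.
unprotected = drift(plus, detuning, idle_time)

# With pulses: drift for half the time, flip, drift again, flip back.
# The second half of the drift undoes the phase picked up in the first half.
half = idle_time / 2
protected = flip(drift(flip(drift(plus, detuning, half)), detuning, half))

fidelity_unprotected = fidelity(unprotected, plus)
fidelity_protected = fidelity(protected, plus)
print(fidelity_unprotected, fidelity_protected)
```

With these illustrative numbers the unprotected fidelity drops to essentially zero, while the pulsed sequence returns the qubit to its starting state. Real noise fluctuates in time and real pulses are imperfect, so the cancellation on hardware is only partial.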

But there's a problem with scaling up this technique: the more physical qubits there are in a system, the more microwave pulses you need to suppress the noise. Eventually, this creates additional noise and adds even more errors to the system, defeating the purpose, the study authors explained.

However, the scientists applied this paradigm to the logical qubit layer, rather than running it strictly at the hardware layer. To do this, they had to invent a method for tuning its pulses, using a mathematical "normalizer" based on the quantum code running on the machine itself. This allowed it to pulse in a rhythm correlating with the machine's code.

The result, normalizer dynamical decoupling, produced the highest-fidelity calculations on a superconducting quantum computer to date. The longer this level of high fidelity can be maintained, the more useful we can expect quantum computers to become.

The number of quantum gates, or single quantum operations, that a quantum system can execute depends on how long it can maintain quantum fidelity. It typically takes about 10 to 12 nanoseconds for a single gate to execute. This means roughly 4,600 to 5,500 consecutive operations could occur in the 55 microseconds before the data degrades, as demonstrated in this study.
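The gate-count estimate above is simple division, and a short sketch makes the arithmetic explicit. It uses only the figures quoted in this article (a 55 microsecond window and gate times of 10 to 12 nanoseconds) and assumes gates run back to back with no idle time; the variable names are illustrative.

```python
# Figures quoted in the article, expressed in nanoseconds.
window_ns = 55_000          # 55 microseconds of high-fidelity operation
gate_times_ns = (10, 12)    # fastest and slowest quoted single-gate durations

# Whole gates that fit in the window, assuming back-to-back execution.
gates_in_window = {t: window_ns // t for t in gate_times_ns}

for gate_time, count in gates_in_window.items():
    print(f"{gate_time} ns per gate -> {count} consecutive gates")
```

The faster gate time gives 5,500 operations and the slower one gives 4,583, which brackets the range quoted above.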

The ultimate goal of quantum computing is to create a device that can run at high fidelity long enough to perform truly useful operations, such as running Shor's algorithm to crack encryption. It's estimated that advanced functions such as these could one day take weeks or months for a capable quantum system to complete properly — which isn't that bad when you consider that it could take a classical computer hundreds of trillions of years to achieve the same result.

The record-breaking 55 microseconds of high-fidelity activity seems a far cry from achieving utility, but it represents a significant leap over previous efforts.

Article Sources

Vezvaee, A., Tripathi, V., Morford-Oberst, M., Butt, F., Kasatkin, V., & Lidar, D. A. (2026). Demonstration of high-fidelity entangled logical qubits using transmons. Nature Communications. https://doi.org/10.1038/s41467-026-70011-3

Tristan Greene

Tristan is a U.S.-based science and technology journalist. He covers artificial intelligence (AI), theoretical physics, and cutting-edge technology stories.

His work has been published in numerous outlets including Mother Jones, The Stack, The Next Web, and Undark Magazine.

Prior to journalism, Tristan served in the US Navy for 10 years as a programmer and engineer. When he isn’t writing, he enjoys gaming with his wife and studying military history.
