A hacker used Anthropic's Claude Code and OpenAI's GPT-4.1 AI systems to steal hundreds of millions of records from the Mexican government. (Image credit: Bloomberg via Getty Images)

Nine Mexican government agencies were hacked in an artificial intelligence (AI)-driven cyber campaign between December 2025 and mid-February 2026 in what researchers have said should "serve as a wake-up call."

According to researchers at cybersecurity company Gambit Security, a small group of individuals used Anthropic's Claude Code and OpenAI's GPT-4.1 to breach both federal and state government agencies and abscond with millions of personal citizen records. Gambit Security representatives outlined the attack in a blog post Feb. 24, which they followed up with a technical report April 10.

To sort through the huge pile of files and decide what to steal, the attackers used more than 1,000 prompts — written requests sent to the AI tools — which led to more than 5,000 commands executed during the operation.

This latest attack reveals how AI may be reshaping cybercrime by helping small groups carry out hacks with the speed and scale of a larger crew, Sela said in the report. AI can both exploit weaknesses that already exist in digital systems and process the stolen information more efficiently.

AI-assisted attack

Over two and a half months, the hackers used more than 400 custom attack scripts, as well as a large program that helped process information stolen from hundreds of internal servers. Claude appears to have done most of the heavy lifting during the hands-on phase of the intrusion, with Gambit representatives saying that about 75% of the remote hack activity was generated and executed by the model. However, Claude's safeguards did not make the process easy for the attackers.

"Throughout the campaign, Claude refused or resisted certain requests — questioning the legitimacy of operations, requesting authorization evidence, and declining to generate specific tools," Sela said.

Although AI chatbots are programmed to refuse potentially harmful requests, some users have been able to "jailbreak," or override, these refusals. In this hack, the researchers found that the hackers needed only 40 minutes to get past Claude's guardrails. After that, Claude helped find security weaknesses to exploit and carried out coding tasks used to steal the data, the researchers said.

ChatGPT was used to help make sense of the stolen documents, with the attackers building a 17,550-line Python tool that moved data through it, producing 2,597 reports of the data stolen from 305 internal servers. The hackers then fed those reports back to Claude to learn from, violating both companies' terms of use for their AI systems.

"Recovering from this attack will take weeks to months; rebuilding trust will likely take years," Gambit's chief strategy officer, Curtis Simpson, said in the blog post. "The attackers in this scenario may have been focused on government identities and backdoors to create fraudulent identities but, considering the level of compromise achieved, this could have just as easily resulted in all data being eliminated and the systems being rendered unrecoverable."

Kenna Hughes-Castleberry, Content Manager, Live Science

Kenna Hughes-Castleberry is the Content Manager at Live Science. Formerly, she was the Content Manager at Space.com and before that the Science Communicator at JILA, a physics research institute. Kenna is also a book author, with her upcoming book 'Octopus X' scheduled for release in spring of 2027. Her beats include physics, health, environmental science, technology, AI, animal intelligence, corvids, and cephalopods.

View MoreYou must confirm your public display name before commenting

Please logout and then login again, you will then be prompted to enter your display name.

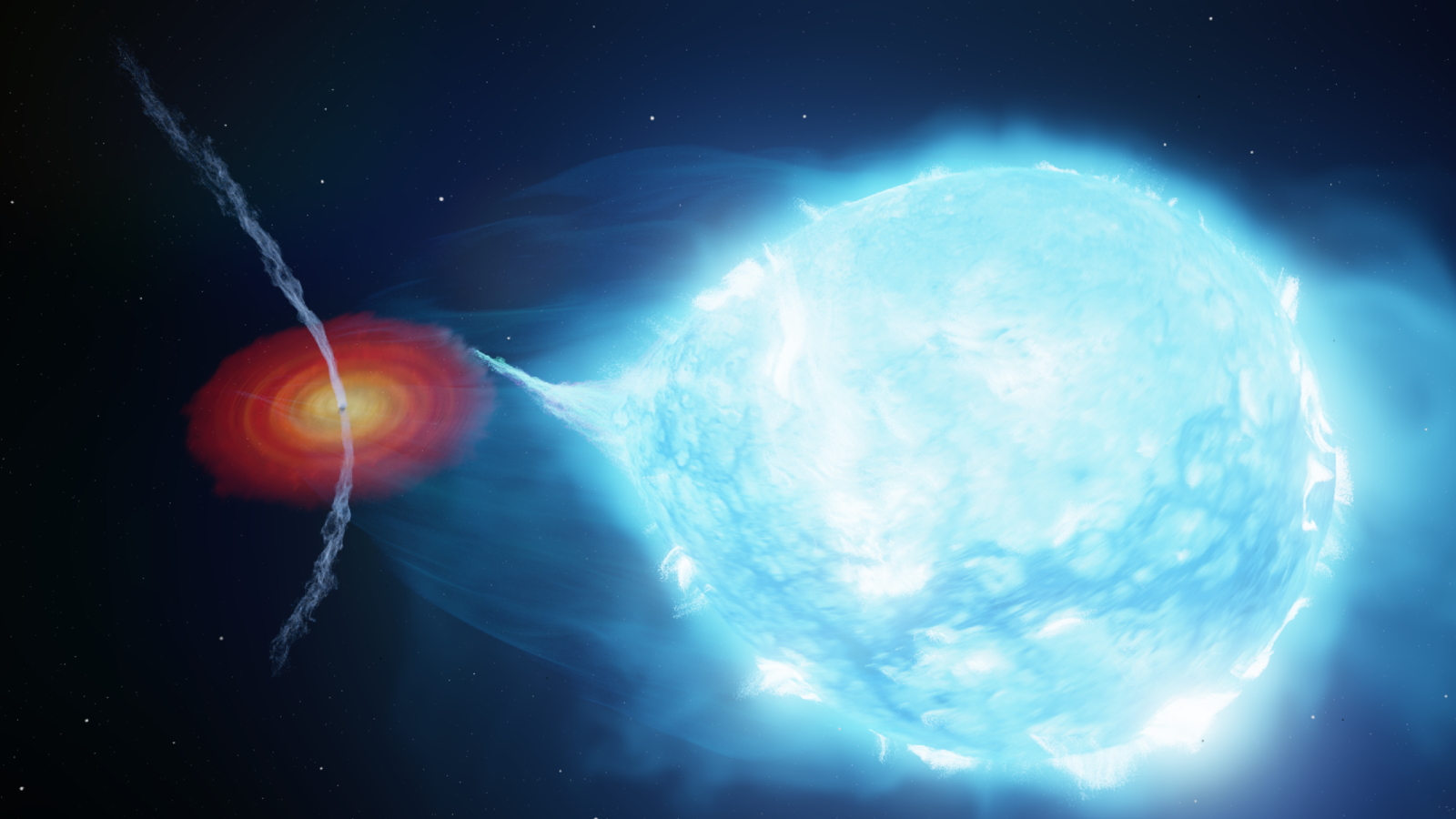

Logout LATEST ARTICLES 1The first black hole ever discovered is spewing 'dancing jets' at half the speed of light

1The first black hole ever discovered is spewing 'dancing jets' at half the speed of light- 2Stephen Hawking's black hole paradox could be solved — if the universe has 7 dimensions

- 3'Something's missing': Most thorough-ever study of the cosmos proves we still can't explain how the universe is expanding

- 4'Human evolution didn't slow down; we were just missing the signal': Large DNA study reveals natural selection led to more redheads and less male-pattern baldness

- 5Artemis II quiz: Is your knowledge of NASA's historic moon mission out of this world?